There’s a comparison people don’t like to make—but probably should: artificial intelligence and guns.

At first glance, they seem worlds apart. One is software; the other is hardware. One lives in data centers; the other in holsters and safes. But at their core, both are immensely powerful tools that amplify human capability. And that amplification is exactly where the tension begins.

Tools Don’t Have Intent. People Do.

A gun doesn’t decide whether it’s used for self-defense or violence. AI doesn’t decide whether it’s used to cure diseases or generate deepfakes. In both cases, the tool is neutral. The outcomes depend entirely on the person using it.

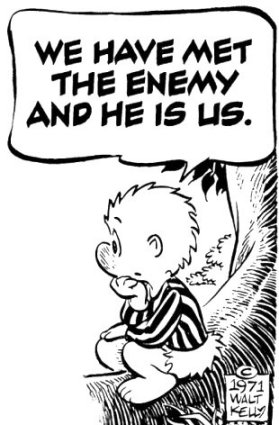

That’s uncomfortable, because it means the real problem isn’t the technology. It’s us.

We’ve seen this play out for decades with firearms. Responsible owners use them safely, train with them, and store them properly. Others misuse them, sometimes with devastating consequences. The same duality is already emerging with AI. Some people are building tools that improve productivity, education, and healthcare. Others are using AI for scams, misinformation, or exploitation.

The pattern is identical: power reveals character.

The “Ban It” Reflex

Whenever a powerful tool causes harm, the instinctive response is to restrict or ban it. With guns, that debate has been ongoing for generations. With AI, it’s accelerating fast.

But there’s a fundamental problem with this approach: restrictions don’t eliminate capability—they just redistribute it.

If you heavily regulate AI development or access, you don’t make the technology disappear. You just concentrate it in fewer hands—governments, large corporations, or bad actors who ignore the rules entirely. Meanwhile, everyday people lose access to tools that could help them defend themselves, compete, and innovate.

It’s the same logic behind the old argument: if only the “bad guys” ignore the rules, then the rules don’t level the playing field—they tilt it.

Asymmetry Is the Real Risk

The real danger isn’t that AI exists. It’s that access to AI becomes uneven.

Imagine a world where malicious actors use AI for automated scams, identity theft, or disinformation campaigns—but regular people are restricted from using AI tools that could detect, counter, or defend against those threats.

That’s not safety. That’s vulnerability.

Just like with physical security, the balance matters. A society where only a small group controls powerful tools is inherently fragile. It creates dependency and reduces individual agency.

Defense vs. Dependence

One of the strongest arguments in the gun debate is that self-defense matters. Whether or not someone agrees with that position, the underlying principle is clear: people want the ability to protect themselves rather than rely entirely on external systems.

AI introduces a similar dynamic.

- AI can help individuals detect fraud, analyze information, and protect their privacy.

- It can act as a personal assistant, advisor, or even a defensive layer against manipulation.

- It can level the playing field between individuals and large institutions.

But if access to AI is restricted in the name of safety, individuals may lose those capabilities. They become more dependent on centralized systems—and those systems don’t always act in their best interest.

Misuse Is Inevitable

Here’s the hard truth: any powerful tool will be misused. That’s not a design flaw—it’s a human constant.

Trying to eliminate misuse entirely is like trying to eliminate risk entirely. It sounds good, but it leads to overcorrection—policies that restrict legitimate use more than they prevent harm.

The question isn’t “How do we stop bad uses completely?”

It’s “How do we empower good uses while mitigating the worst outcomes?”

That’s a much harder problem. It requires nuance, education, and accountability—not just prohibition.

The Tradeoff We Don’t Want to Admit

Every increase in safety through restriction comes with a tradeoff in freedom, capability, or resilience.

With guns, that tradeoff is debated endlessly. With AI, we’re just beginning to face it.

Do we prioritize centralized control and reduced risk?

Or do we prioritize individual empowerment, even if it comes with messiness and danger?

There’s no perfect answer, but pretending there is leads to bad decisions.

A More Honest Approach

If AI follows the same trajectory as other powerful technologies, a few things are likely true:

- It will become widely accessible, regardless of restrictions.

- It will be used for both good and bad purposes.

- Attempts to fully control it will fail—or create unintended consequences.

So the better path might not be strict limitation, but widespread empowerment paired with responsibility:

- Teach people how AI works and how it can be misused.

- Build tools that help individuals defend against AI-driven threats.

- Encourage transparency rather than secrecy.

- Focus on accountability for harmful actions, not just access to tools.

Final Thought

AI, like guns, forces us to confront an uncomfortable reality: technology doesn’t create human nature. It amplifies it.

Trying to solve that by limiting the tool is tempting, but incomplete.

Because in the end, the real question isn’t whether people should have access to powerful tools.

It’s whether we trust individuals with power—or whether we believe that concentrating that power elsewhere somehow makes us safer.

History suggests the answer is far from simple, but the outcome is *almost* always the same.
