/** AI used to format this article and clean up typos **/

The Prompt That Changes Things

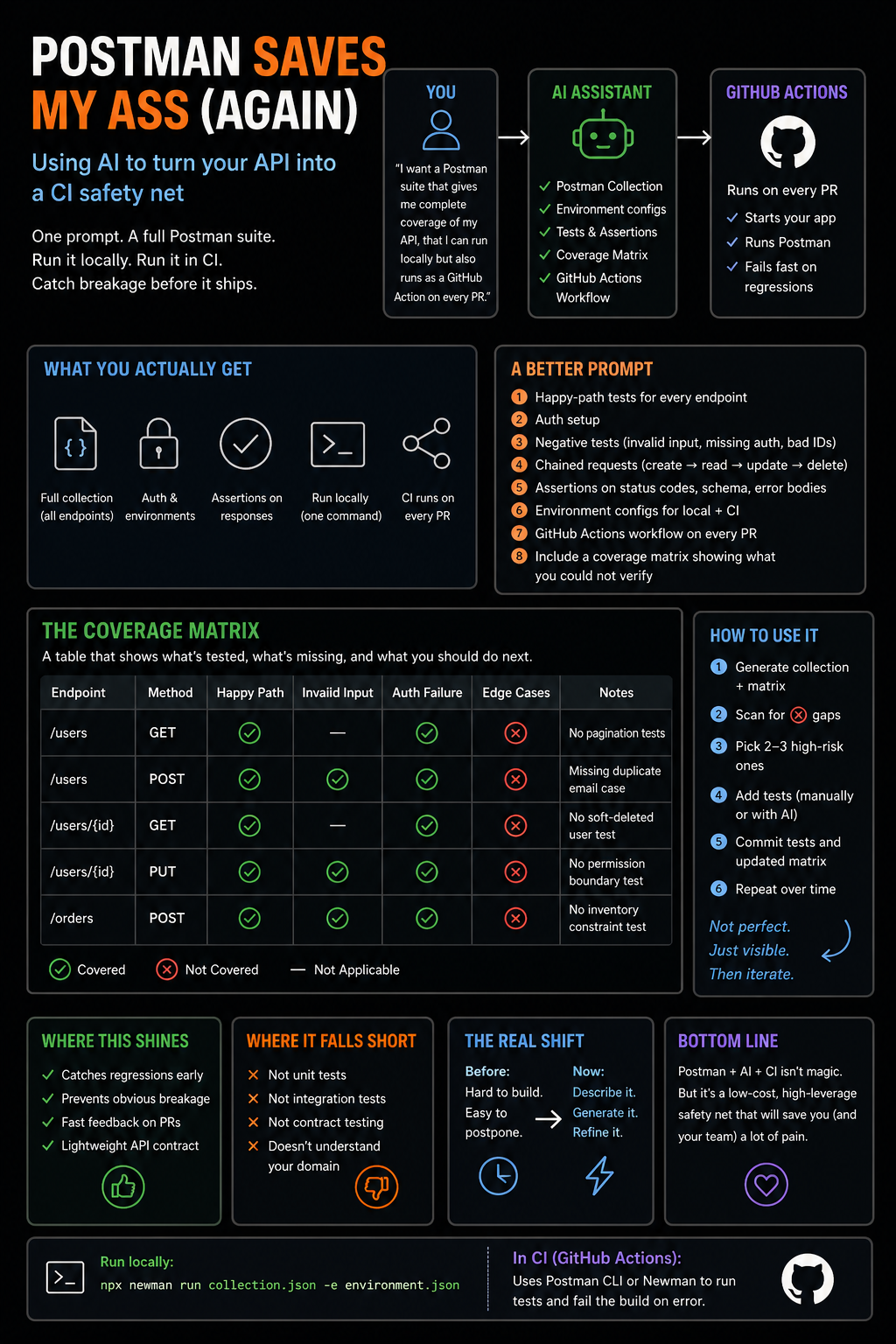

If you’ve got an API, try telling your AI this:

“I want a Postman suite that gives me complete coverage of my API, that I can run locally but also runs as a GitHub Action when anyone makes a PR.”

That one sentence can bootstrap:

- A full Postman collection

- Environment configs (local + CI)

- Test assertions

- A CI workflow that runs on every PR

In a couple of minutes, you go from “manual testing” to automated regression protection.

That’s not trivial.

What You Actually Get

A decent AI-generated setup usually includes:

- Happy-path tests for every endpoint

- Basic auth handling

- Assertions on status codes and response shapes

- Environment variables for portability

- A CI runner (Postman CLI or Newman)
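
To make “assertions on status codes and response shapes” concrete, here is a minimal sketch of that kind of check. Postman test scripts are written in JavaScript; this Python version only illustrates the logic. The `check_response` helper and the user fields are hypothetical, not anything a generated collection is guaranteed to contain.

```python
def check_response(status, body, expected_status, required_fields):
    """Return a list of human-readable failures (empty means the check passed)."""
    failures = []
    if status != expected_status:
        failures.append(f"expected status {expected_status}, got {status}")
    for name, expected_type in required_fields.items():
        if name not in body:
            failures.append(f"missing field '{name}'")
        elif not isinstance(body[name], expected_type):
            failures.append(f"field '{name}' is not {expected_type.__name__}")
    return failures


# Assumed shape for a hypothetical GET /users/123 response.
user_shape = {"id": int, "email": str}

# A well-formed response produces no failures.
print(check_response(200, {"id": 123, "email": "a@example.com"}, 200, user_shape))

# A wrong type and a missing field are both reported.
print(check_response(200, {"id": "123"}, 200, user_shape))
```

Note what this does and does not check: it confirms the response has the right status and fields, not that the values are correct for your domain.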

And suddenly:

- You can run everything locally with one command

- Every PR gets validated automatically

- You catch obvious breakage immediately

That alone is worth it.

The Problem With “Complete Coverage”

Here’s where people get sloppy.

“Complete coverage” sounds great. It’s also misleading.

AI can give you endpoint coverage.

It cannot infer business logic correctness.

It doesn’t know:

- What “valid” actually means in your domain

- Which edge cases matter

- What invariants your system depends on

- Where your real bugs tend to hide

So yes, it will test:

GET /users/123 → 200 OK

But it probably won’t test:

- Soft-deleted users

- Permission boundaries

- Race conditions

- Data consistency across flows

If you treat generated tests as “complete,” you’re setting yourself up for a false sense of security.

The Better Prompt

If you want something actually useful, tighten the ask:

Create a Postman collection with:

- happy-path tests for every endpoint

- auth setup

- negative tests (invalid input, missing auth, bad IDs)

- chained requests (create → read → update → delete)

- assertions on status codes, response schema, and error bodies

- environment configs for local + CI

- a GitHub Actions workflow that runs on every PR
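
The chained-request and negative-test items are the ones people tend to skip, so here is a rough sketch of what they mean. It runs against an in-memory `FakeUsersApi`, which is a made-up stand-in for a real API; the status codes it returns are assumptions about a typical REST contract. In Postman, the created id would typically be stored in a variable and reused by the later requests.

```python
class FakeUsersApi:
    """In-memory stand-in for a hypothetical /users API; returns (status, body)."""

    def __init__(self):
        self._users = {}
        self._next_id = 1

    def create(self, payload):
        if "email" not in payload:
            return 400, {"error": "email is required"}
        user = {"id": self._next_id, **payload}
        self._users[user["id"]] = user
        self._next_id += 1
        return 201, user

    def read(self, user_id):
        user = self._users.get(user_id)
        return (200, user) if user else (404, {"error": "not found"})

    def update(self, user_id, changes):
        if user_id not in self._users:
            return 404, {"error": "not found"}
        self._users[user_id].update(changes)
        return 200, self._users[user_id]

    def delete(self, user_id):
        if self._users.pop(user_id, None) is None:
            return 404, {"error": "not found"}
        return 204, None


def run_chained_flow(api):
    """Create -> read -> update -> delete, then run two negative checks."""
    results = []
    status, body = api.create({"email": "a@example.com"})
    results.append(("create", status))
    user_id = body["id"]  # value carried forward to the later steps

    results.append(("read", api.read(user_id)[0]))
    results.append(("update", api.update(user_id, {"email": "b@example.com"})[0]))
    results.append(("delete", api.delete(user_id)[0]))
    results.append(("read after delete", api.read(user_id)[0]))  # negative check
    results.append(("create without email", api.create({})[0]))  # negative check
    return results


for step, status in run_chained_flow(FakeUsersApi()):
    print(f"{step}: {status}")
```

The point of chaining is that each step depends on state left by the previous one, which is where isolated happy-path tests tend to miss bugs.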

And then add the most important line:

Include a coverage matrix showing what you could not verify.

That line changes everything.

The Coverage Matrix Is the Whole Game

A coverage matrix is just a table that answers:

- What endpoints exist?

- What scenarios are tested?

- What scenarios are missing?

Example:

| Endpoint | Method | Happy Path | Invalid Input | Auth Failure | Edge Cases | Notes |
|---|---|---|---|---|---|---|
| /users | GET | ✅ | n/a | ✅ | ❌ | No pagination tests |
| /users | POST | ✅ | ✅ | ✅ | ❌ | Missing duplicate email case |
| /orders | POST | ✅ | ✅ | ✅ | ❌ | No inventory constraint test |
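
If you would rather keep the matrix as data than as a hand-edited table, a small script can render it and list the gaps. This is a sketch under assumptions: the `MATRIX` structure and the function names are invented for illustration, and the rows simply mirror the example table, with "n/a" marking a cell that does not apply.

```python
COLUMNS = ["Happy Path", "Invalid Input", "Auth Failure", "Edge Cases"]

# True = tested, False = gap, None = not applicable.
MATRIX = [
    ("/users", "GET", [True, None, True, False], "No pagination tests"),
    ("/users", "POST", [True, True, True, False], "Missing duplicate email case"),
    ("/orders", "POST", [True, True, True, False], "No inventory constraint test"),
]

SYMBOLS = {True: "✅", False: "❌", None: "n/a"}


def render_markdown(matrix):
    """Render the matrix as a markdown table."""
    header = ["Endpoint", "Method", *COLUMNS, "Notes"]
    lines = ["| " + " | ".join(header) + " |",
             "|" + "---|" * len(header)]
    for endpoint, method, cells, notes in matrix:
        row = [endpoint, method, *(SYMBOLS[c] for c in cells), notes]
        lines.append("| " + " | ".join(row) + " |")
    return "\n".join(lines)


def list_gaps(matrix):
    """Return (method, endpoint, scenario, notes) for every untested cell."""
    return [(method, endpoint, column, notes)
            for endpoint, method, cells, notes in matrix
            for column, cell in zip(COLUMNS, cells)
            if cell is False]


print(render_markdown(MATRIX))
for method, endpoint, scenario, notes in list_gaps(MATRIX):
    print(f"GAP: {method} {endpoint} / {scenario}: {notes}")
```

Keeping the matrix next to the collection in the repo means a PR that adds a test can flip the matching cell in the same commit.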

Most teams don’t have this.

They have tests—but no map of what those tests actually cover.

Why This Matters

If you don’t ask for a matrix, the AI will:

- Generate a bunch of tests

- Look comprehensive

- Miss entire categories of failure

And you won’t notice.

When you do ask for it:

- The AI is forced to enumerate scenarios

- Gaps become visible

- You get a checklist instead of a blob of code

You’ve turned:

“Generate tests”

into:

“Model the problem space—and admit what’s missing”

That’s a much better outcome.

The Real Value: Exposing Blind Spots

The most useful parts of the matrix are the ❌ cells:

- ❌ Edge cases

- ❌ Concurrency

- ❌ Data lifecycle issues

- ❌ Permission boundaries

Because those are the bugs that actually hurt.

AI will naturally cover:

- 200 responses

- Basic validation

- Auth presence/absence

It will not naturally cover:

- “User exists but is inactive”

- “Same request sent twice”

- “Inventory changed mid-request”

- “User can access something they shouldn’t”

The matrix makes that painfully obvious.

How to Use It (Without Overthinking It)

Treat the matrix as a work queue, not documentation.

A simple loop:

- Generate collection + matrix

- Scan for ❌ gaps

- Pick 2–3 high-risk ones

- Add tests (manually or with AI)

- Commit both tests and updated matrix
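
The loop above can be sketched in a few lines. Everything here is hypothetical: the gap entries, the 1 to 5 risk scores (a judgment call for your team, not something the AI can assign reliably), and the helper names.

```python
# Hypothetical gap list pulled from a coverage matrix; risk: 1 = low, 5 = high.
gaps = [
    {"endpoint": "GET /users", "scenario": "pagination", "risk": 2},
    {"endpoint": "POST /users", "scenario": "duplicate email", "risk": 4},
    {"endpoint": "POST /orders", "scenario": "inventory constraint", "risk": 5},
    {"endpoint": "GET /users/{id}", "scenario": "soft-deleted user", "risk": 3},
]


def next_batch(gaps, size=3):
    """Pick the highest-risk open gaps for this iteration."""
    return sorted(gaps, key=lambda g: g["risk"], reverse=True)[:size]


def close_gaps(gaps, done):
    """Return the gaps that remain after the chosen ones get tests."""
    return [g for g in gaps if g not in done]


batch = next_batch(gaps)
for gap in batch:
    print(f"TODO: {gap['endpoint']} - {gap['scenario']} (risk {gap['risk']})")

remaining = close_gaps(gaps, batch)
print(f"{len(remaining)} gap(s) left for the next pass")
```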

Repeat over time.

You don’t need perfection. You need visibility and iteration.

Where This Actually Shines

This setup is not a replacement for real testing.

But it is extremely good at:

- Catching regressions early

- Preventing obvious breakage from shipping

- Giving fast feedback on PRs

- Acting as a lightweight API contract

In other words, it’s a safety net that runs both locally and on every PR.

Most teams don’t even have that.

Where It Falls Short

Let’s be blunt:

- It won’t replace unit tests

- It won’t replace integration tests

- It won’t replace contract testing

- It won’t understand your domain

If your system is complex, this is just one layer.

But it’s a layer that’s cheap and immediately useful.

The Real Shift

The interesting part isn’t Postman.

It’s this:

You can now describe testing infrastructure in plain English and get something usable back.

Before:

- Test suites + CI wiring took real effort

- Easy to postpone

Now:

- You can bootstrap it in minutes

- Refinement becomes the real work

That’s a big change.

Bottom Line

Postman + AI + CI isn’t magic.

But it’s one of those rare setups where:

- The barrier to entry is low

- The payoff is immediate

- And the failure mode is “you still learn something”

Use it as a safety net, not a crutch.

And when something feels off—don’t assume your tests are right.

They’re just another layer of code.
